
By combining the power of Docker and Python, I can create an analytics platform that always runs and does not depend on the versions of Python or any other software installed on my laptop.

I will show how easy it is to set up Jupyter notebooks, use them for analytics, and publish an analysis to this blog. Docker gives me at least two benefits:

1. When I start my Docker image, I can be sure the analysis will run, because the image packages the same versions of all the software every time.
2. If my laptop is not powerful enough for an analysis, I can deploy the same Docker image to a much more powerful machine in the cloud.
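To see which versions a given image actually provides, a notebook cell along these lines can record them next to the analysis. This is a minimal sketch and not part of the original project: the `environment_report` helper is hypothetical, and the package names passed to it are only examples (quandl is the package this post's Dockerfile adds).

```python
import platform
from importlib import metadata


def environment_report(packages):
    """Return the Python version plus the installed version of each package.

    Packages that are not installed map to None instead of raising.
    """
    report = {"python": platform.python_version()}
    for name in packages:
        try:
            report[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = None
    return report


if __name__ == "__main__":
    # Example package names only; adjust to whatever the analysis imports.
    for name, version in environment_report(["numpy", "pandas", "quandl"]).items():
        print(f"{name}: {version or 'not installed'}")
```

Printing this at the top of a notebook makes it easy to compare runs on the laptop and on a cloud machine started from the same image.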

Setting up and running

First, I need a Dockerfile that specifies the environment I want to run. It contains only two lines:

```dockerfile
FROM sachinruk/ml_class
RUN conda install -y quandl
```

Thanks go to Sachin Abeywardana, who has already set up a Docker image with Python and Jupyter installed. I only needed to install quandl, since I need access to financial data.

I like to use docker-compose to run my Docker images. To do this, I need one more file, and then I can start the platform.

docker-compose.yml

```yaml
version: '2'
services:
  app:
    build: .
    volumes:
      - ./:/notebook
    environment:
      KERAS_BACKEND: tensorflow
    ports:
      - "9000:8888"
```
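The `ports` entry uses Compose's `HOST:CONTAINER` form: container port 8888 (Jupyter's default) is published as port 9000 on the laptop. The sketch below splits that string and checks whether anything is answering on the host port, which can be handy while the container is still starting. Both helper names are hypothetical, and `parse_port_mapping` only handles the plain two-number form, not variants such as `127.0.0.1:9000:8888` or a `/tcp` suffix.

```python
import socket


def parse_port_mapping(mapping):
    """Split a simple Compose-style "HOST:CONTAINER" port string into two ints."""
    host_port, container_port = mapping.split(":")
    return int(host_port), int(container_port)


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections and timeouts
        return False


if __name__ == "__main__":
    host_port, container_port = parse_port_mapping("9000:8888")
    print(f"container port {container_port} is published on localhost:{host_port}")
    print("reachable:", is_port_open("localhost", host_port))
```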

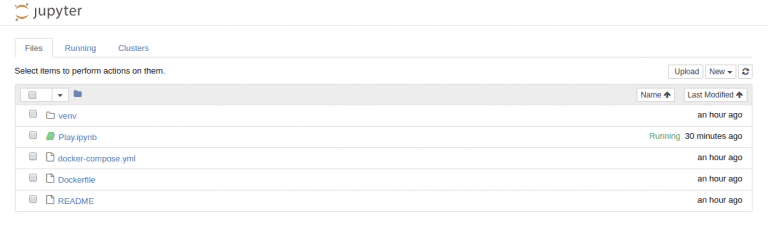

When I run the command “docker-compose up” the service will start up and I can access it in my browser on http://localhost:9000

It then looks like this:

Using Jupyter

To use the platform, you need a good understanding of Python, since that is the programming language that powers it. To get started, I have created a sample notebook that fits a linear regression on stock data.
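The sample notebook's code is not reproduced in this post, so the following is only a minimal, dependency-free sketch of the idea: an ordinary least-squares fit of closing price against day number. The `fit_line` helper and the price values are hypothetical. In the notebook, the prices would come from quandl, and a library such as NumPy or scikit-learn could do the fitting instead.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x with a single predictor."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


if __name__ == "__main__":
    # Hypothetical closing prices, one per trading day (illustration only).
    closes = [101.2, 102.0, 101.7, 103.1, 104.0]
    days = list(range(len(closes)))
    intercept, slope = fit_line(days, closes)
    print(f"trend: {slope:.3f} per day, starting at {intercept:.2f}")
    print(f"naive next-day estimate: {intercept + slope * len(closes):.2f}")
```

A straight-line trend like this is a starting point for exploring the data, not a trading model.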

In use, the notebook looks like the screenshot above, and every cell is editable. I particularly like that I can write nicely formatted documentation alongside the code. It even supports math expressions, which are rendered as typeset formulas.
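As an illustration of the math support, a Markdown cell could contain the least-squares estimates for a simple regression of $y$ on $x$ in LaTeX syntax. The notebook then renders them as formatted equations:

```latex
$$\hat{\beta}_1 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2},
\qquad
\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}$$
```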

I’m going to use this platform for future analyses, so check the blog once in a while for new ones.

You can download the full project: jupyter.
